
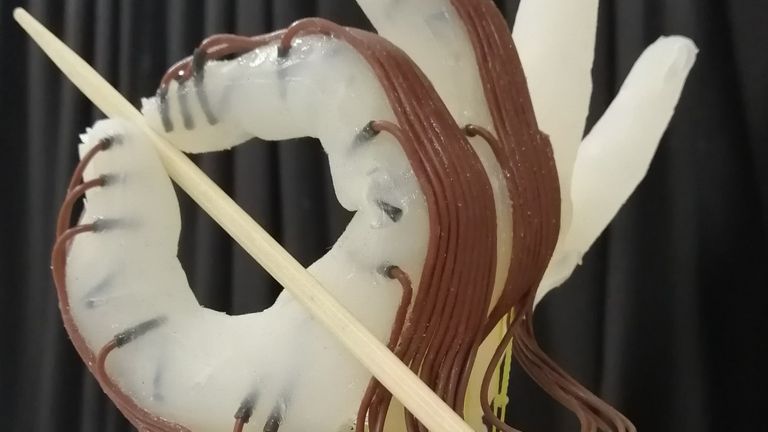

From holding a ball to daintily grasping a chopstick, a new robotic hand developed by scientists in the UK can grasp a range of objects just by moving its wrist and using the feeling in its “skin”.

The 3D-printed appendage is designed to be low-cost and energy-efficient, capable of carrying out complex movements despite not being able to use each finger independently.

Professor Fumiya Iida, of the University of Cambridge’s Bio-Inspired Robotics Laboratory, said the aim was to “simplify the hand as much as possible”.

Most advanced robots capable of feats like those of the human hand have fully motorised fingers, making them more difficult and expensive to produce.

But this cheaper alternative has proved remarkably capable across more than 1,200 tests, including knowing how much pressure to apply to a given object.

‘Robot skin’ helps judge needed force

While you should instinctively know to handle an egg gently without shattering it and ruining breakfast, robots need training to recognise the right amount of force.

In this case, researchers implanted the hand with sensors so it could sense what it was touching.

It used trial and error to learn what kinds of grip would be successful, starting with balls and then moving on to everything from peaches and bubble wrap to a computer mouse.

Study co-author Dr Thomas George-Thuruthel, now of University College London, said the sensors were “kind of like the robot’s skin”.

“We can’t say exactly what information the robot is getting,” he added, “but it can theoretically estimate where the object has been grasped and with how much force.”

The robot can also predict whether it is going to drop an object, and adapt accordingly.

Researchers hope the robot hand could be improved further, for example by adding computer vision capabilities and teaching it to exploit its environment to grasp a wider range of objects.

The results are reported in the journal Advanced Intelligent Systems.
